How I Ran Regression Tests on Nepal's Biggest Fintech App Using Passmark + Playwright

I described what to test in plain English, and Passmark + Playwright handled the rest. Here’s what I found while testing eSewa, Nepal’s most popular digital wallet.

Software Developer 👩💻 | Technical Writer 📝 | Student 🎓

Introduction

Can AI test Nepal’s biggest fintech platform using plain English? I tried.

Modern web applications move fast, but testing often struggles to keep up. A small UI update, renamed button, or layout change can break traditional automation and create unnecessary maintenance work.

That’s where things get interesting.

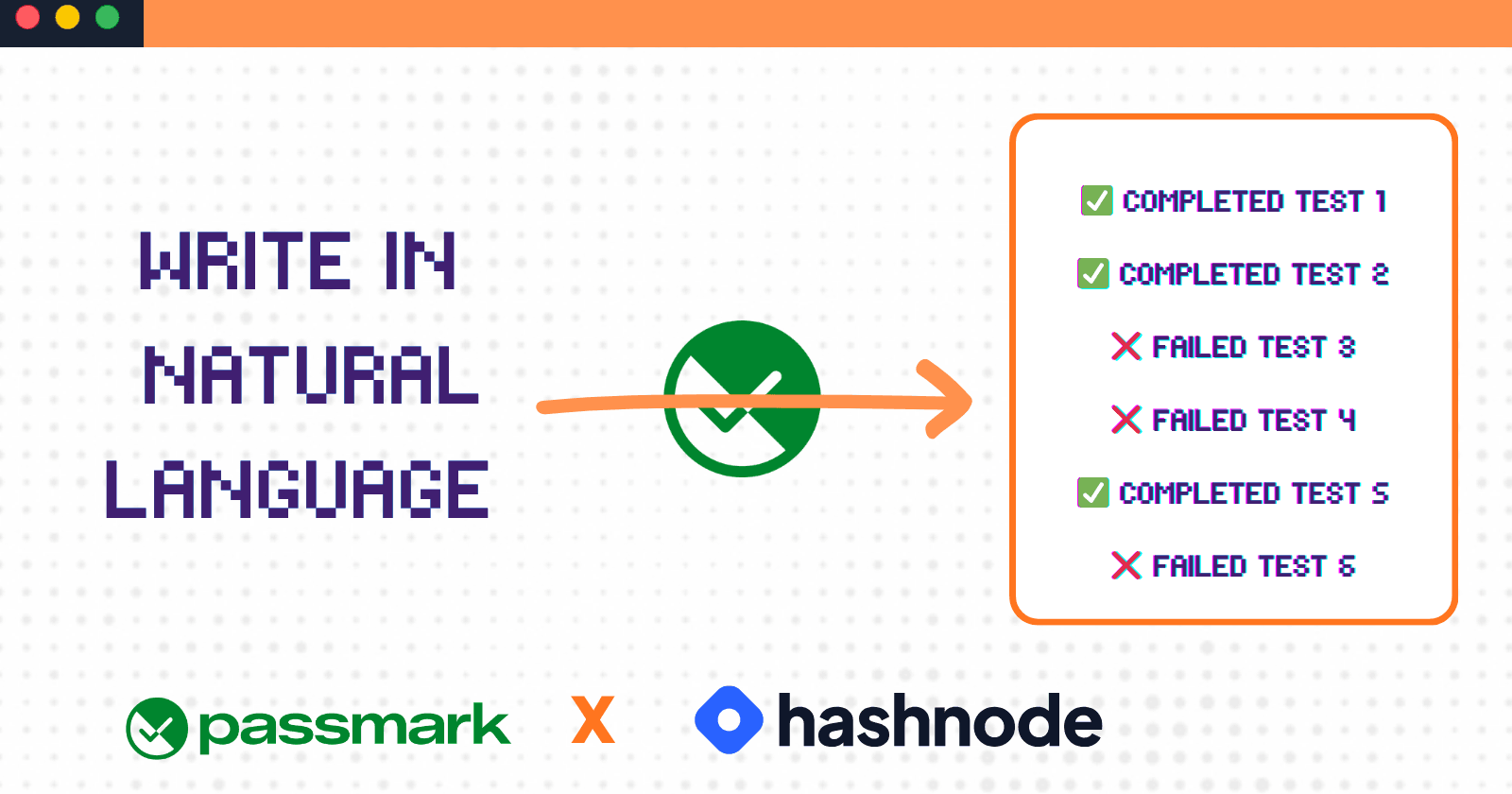

What if testing didn’t require writing complex scripts at all? What if we could just describe what we want to test… in plain English?

That’s exactly what Passmark enables.

For this experiment, I chose eSewa, one of Nepal’s most widely used digital wallet platforms trusted for payments, transfers, and everyday transactions. To make it happen, I used Passmark for plain-English AI testing flows and Playwright for browser automation.

If you want to jump straight to the demo video: 👉 Demo Video

Why I Chose eSewa?

When selecting a target for this hackathon, I wanted to work on something practical and meaningful.

The hackathon suggested testing any web app, including demo applications, which is great for experimentation. However, I decided to apply Passmark to a real-world use case instead.

That’s why I chose eSewa, one of Nepal’s most widely used digital wallet platforms. Since it handles real payments, transfers, and daily transactions, it represents a more realistic testing environment.

This made the experiment more interesting: How well can AI-powered regression testing handle a real production fintech system?

That question became the motivation for this project.

Project Overview

This project is a regression testing suite built using Passmark + Playwright to test real user flows of eSewa.

Instead of writing traditional automation scripts with selectors or page objects, I used Passmark to describe test steps in plain English, and Playwright to execute them in a real browser environment. The goal was to validate whether AI-driven testing can reliably handle real-world application flows such as navigation, UI interactions, and basic user journeys.

The test suite focuses on core user-facing flows, including:

Landing page accessibility and navigation

Key UI interactions and button behavior

Basic user flow validation across pages

Regression checks to ensure stability across updates

Tech Stack

This project was built using a modern testing stack centered around Passmark.

Passmark: An AI-driven testing framework that interprets natural-language test steps and manages execution workflows.

Playwright: Used under the hood for browser automation, navigation, and UI interactions.

OpenRouter API: Provides the AI model gateway for Passmark during test execution.

TypeScript: Used for writing structured and maintainable test files.

Node.js: Provides the runtime environment for running the test suite.

GitHub: Used for version control.

In simple terms, Passmark handled the test intent, Playwright handled the browser actions, and the AI layer bridged the two. This kept the stack powerful while keeping the developer experience simple.

What I Tested?

To make this project practical, I focused on real user-facing flows across eSewa’s public website. I built regression scenarios around the pages that involve the most user interaction and navigation.

1. Homepage & First Impression Checks

I started with the landing experience by validating:

homepage load state

visible eSewa branding and logo

hero section visibility

page title correctness

navigation menu presence

2. Hero Section

I tested the hero section to ensure that key first-screen elements like the headline, CTA button, and banner were visible and correctly rendered.

headline or tagline visibility

call-to-action button presence

hero banner / image rendering

3. Download App Section

Since mobile adoption is critical for wallet platforms, I verified:

App Store download visibility

Google Play download visibility

4. Featured Services Interaction

To check how easily services can be found, I tested:

navigating to service detail pages or modals

service title visibility

description visibility

back / close interaction availability

5. Footer Integrity

I also checked important footer content such as:

contact information

social media links

copyright text

6. Login Validation Flow

For authentication UX, I tested empty field submission scenarios to verify:

login modal presence

phone number field visibility

password field visibility

sign-in button rendering

validation or error feedback for empty inputs
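As a rough sketch, the empty-submit scenario above could be written down as plain data, using the same `{ description }` and `{ assertion }` object shape as the homepage example in this post. The `FlowSpec` type, the `loginEmptySubmit` name, and the exact wording of each step are assumptions for illustration only.

```typescript
// Illustrative types mirroring the object shape used in this post's
// homepage example. They are assumptions, not Passmark's published types.
type Step = { description: string };
type Assertion = { assertion: string };
type FlowSpec = { userFlow: string; steps: Step[]; assertions: Assertion[] };

// Empty-field login scenario expressed as plain data (hypothetical wording).
const loginEmptySubmit: FlowSpec = {
  userFlow: "Submit the eSewa login form with empty fields",
  steps: [
    { description: "Navigate to https://esewa.com.np" },
    { description: "Open the login form" },
    {
      description:
        "Click the sign-in button without entering a phone number or password",
    },
  ],
  assertions: [
    { assertion: "A phone number field is visible" },
    { assertion: "A password field is visible" },
    { assertion: "A validation or error message is shown for the empty inputs" },
  ],
};

console.log(
  `${loginEmptySubmit.steps.length} steps, ${loginEmptySubmit.assertions.length} assertions`,
);
```

An object like this could then be spread into the call, for example `runSteps({ page, ...loginEmptySubmit, test, expect })`, matching the parameters used in the homepage test.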

7. Navigation Regression Checks

I tested movement between major sections including:

Services

Merchant / Business

Help / FAQ

About Us

returning to the homepage via logo navigation
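Because these navigation checks all follow the same pattern, one way to avoid copy-pasting them is to generate the flow descriptions from a list of section names. The sketch below is only an illustration: `buildNavFlow`, the `NavFlow` type, and the menu labels are hypothetical, and the real labels on the site may differ.

```typescript
// Illustrative shapes modeled on the homepage example in this post (assumptions).
type Step = { description: string };
type Assertion = { assertion: string };
type NavFlow = { userFlow: string; steps: Step[]; assertions: Assertion[] };

const HOME_URL = "https://esewa.com.np";

// Build one "open a section from the homepage" flow for a given menu label.
function buildNavFlow(section: string): NavFlow {
  return {
    userFlow: `Open the ${section} section from the homepage`,
    steps: [
      { description: `Navigate to ${HOME_URL}` },
      { description: `Click "${section}" in the top navigation menu` },
    ],
    assertions: [
      { assertion: `Content for the ${section} section is visible on the page` },
    ],
  };
}

// Hypothetical menu labels; adjust to whatever the site actually shows.
const sections = ["Services", "Merchant", "Help", "About Us"];
const navFlows = sections.map(buildNavFlow);

for (const flow of navFlows) {
  console.log(flow.userFlow);
}
```

Each generated object could be passed to `runSteps` inside its own `test(...)` block, so a failure in one section does not hide the others.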

Test Architecture & Code Snapshot

Rather than placing every scenario into a single large file, I structured the suite into smaller focused test groups. This made the project easier to maintain and easier to expand later.

Example structure:

esewa-landing.spec.ts → Tests for the landing page, hero section, footer, and download area.

esewa-login.spec.ts → Checks and test cases for empty-field validation and the authentication UI.

esewa-navigation.spec.ts → Tests for the Services, Merchant, Help, and About flows, plus navigation back to the homepage.

Example:

```typescript
// Imports assumed from the Passmark README; adjust to your installed version.
import { test, expect } from "@playwright/test";
import { runSteps } from "passmark";

test("Homepage loads correctly", async ({ page }) => {
  // AI-driven steps are slow, so give this test a 60-second timeout.
  test.setTimeout(60_000);

  await runSteps({
    page,
    userFlow:
      "Validate eSewa homepage loads with key elements and basic interactions",
    // Actions to perform, described in plain English.
    steps: [
      { description: "Navigate to https://esewa.com.np" },
      { description: "Click on the eSewa logo" },
    ],
    // Conditions that must hold once the steps have run.
    assertions: [
      { assertion: "eSewa logo is visible in the header" },
      { assertion: "User remains on or is redirected to homepage" },
      { assertion: "Top navigation menu with multiple items is visible" },
      { assertion: "Page title contains the word eSewa" },
      { assertion: "Hero section displays a headline or key message" },
      {
        assertion:
          "Clicking the eSewa logo keeps or brings the user to the homepage",
      },
      { assertion: "Navigation menu items appear clickable or interactive" },
    ],
    test,
    expect,
  });
});
```

All the test suites are available here (I felt it wouldn’t be a good idea to include all the code directly in the blog).

Note: The goal was not to maximize the number of tests, but to ensure coverage of meaningful user journeys.

Challenges I Faced

One unexpected issue I faced was related to the OpenRouter API key setup.

As mentioned in the hackathon instructions, the API key is sent automatically to the registered email after signing up for the Passmark hackathon. I ended up registering twice and received two separate emails with different keys. Without realizing it, I used the older API key in my project, which caused authentication errors such as “no user found” during execution.

After some debugging, I revisited my email, identified the correct key, updated the configuration, and the issue was resolved immediately.

This was a simple mistake, but it highlighted how small configuration issues can completely block a working test suite.
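A small guard at startup can make this kind of mistake easier to spot. The sketch below is an assumption-laden illustration: the variable name `OPENROUTER_API_KEY` and the helpers `requireEnv` and `maskKey` are hypothetical, and the key value is made up. It only checks that a key is present and prints its last few characters so you can tell which of two keys is active; it does not verify the key with OpenRouter.

```typescript
// Return a trimmed, non-empty variable from an env-like map, or throw clearly.
function requireEnv(
  name: string,
  env: Record<string, string | undefined>,
): string {
  const value = env[name]?.trim();
  if (!value) {
    throw new Error(`Missing ${name}: set it before running the tests`);
  }
  return value;
}

// Mask all but the last few characters so the key can be identified safely.
function maskKey(key: string, visible = 4): string {
  if (key.length <= visible) return "*".repeat(key.length);
  return "*".repeat(key.length - visible) + key.slice(-visible);
}

// Made-up value for the example; a real run would pass process.env instead.
const fakeEnv = { OPENROUTER_API_KEY: "sk-or-example-1234" };
const apiKey = requireEnv("OPENROUTER_API_KEY", fakeEnv);
console.log(`Using OpenRouter key ${maskKey(apiKey)}`);
```

Comparing the printed suffix against the key in the most recent email would have shown right away that the older key was still configured.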

Running the Tests: What Worked, What Broke, and What Surprised Me

After running the test suite on eSewa, the results were not perfectly clean, and that’s where the real insights came from.

1. What Worked?

Some tests passed smoothly without any modification, which was a great motivation to keep working on this project.

The homepage validation and app download section tests completed successfully, confirming that Passmark handled basic visibility checks and straightforward flows reliably.

These tests involved minimal navigation and fewer steps, which made execution faster and more predictable.

2. What Broke?

The more interesting behavior appeared in slightly more complex flows.

Several tests failed due to timeouts, including:

hero section content validation

featured service interaction

footer validation

navigation flows such as the Services, Merchant, and About sections

These weren’t hard failures; the tests simply exceeded the 60-second timeout (the test.setTimeout(60_000) value shown in the example above).

This showed that combining multiple steps like scrolling, clicking, and validating content increases execution time significantly in AI-driven tests.
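One way to act on this is to scale each test's timeout with the number of steps and assertions instead of using a single fixed value. The helper below is a minimal sketch: `timeoutFor` is a hypothetical name, and the per-item budgets are assumed numbers for illustration, not measurements from these runs.

```typescript
// Rough budgets in milliseconds. These values are assumptions for
// illustration, not timings measured on eSewa.
const BASE_MS = 20_000; // page load and first navigation
const PER_STEP_MS = 10_000; // each AI-interpreted step
const PER_ASSERTION_MS = 5_000; // each AI-evaluated assertion

// Compute a timeout that grows with the size of the flow.
function timeoutFor(stepCount: number, assertionCount: number): number {
  return BASE_MS + stepCount * PER_STEP_MS + assertionCount * PER_ASSERTION_MS;
}

// The homepage example in this post has 2 steps and 7 assertions.
const homepageBudget = timeoutFor(2, 7);
console.log(`Homepage timeout budget: ${homepageBudget} ms`);
```

Inside a test, the result could be passed to Playwright with `test.setTimeout(timeoutFor(steps.length, assertions.length))`, so longer flows automatically get more room.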

I also hit a specific issue on the FAQ page: the page title was visible, but the expected question content was not detected when the assertion ran, so the assertion failed.

3. What Surprised Me?

Two things stood out.

First, navigation-heavy tests were slower than expected.

I initially assumed simple UI checks would be trivial, but they required more time because of page transitions and content loading.

Second, wording mattered more than I expected.

Small changes in step descriptions, like being more explicit about what to look for, noticeably improved execution reliability.
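To make the difference concrete, here is a hypothetical before-and-after for the FAQ check. None of this wording is taken from the actual spec files; it only illustrates what "more explicit" can look like, including a step that names what to wait for before asserting.

```typescript
// Illustrative shapes modeled on the homepage example in this post (assumptions).
type Step = { description: string };
type Assertion = { assertion: string };

// Vaguer wording: leaves the model to guess where to go and what to check.
const vagueSteps: Step[] = [
  { description: "Go to the FAQ page" },
  { description: "Check the questions" },
];

// More explicit wording: names the start URL, the element to click,
// and the content to wait for.
const explicitSteps: Step[] = [
  { description: "Navigate to https://esewa.com.np" },
  { description: "Click the Help / FAQ link in the top navigation menu" },
  { description: "Wait until at least one FAQ question is visible on the page" },
];

const explicitAssertions: Assertion[] = [
  { assertion: "The page shows an FAQ heading" },
  { assertion: "At least one question is listed under the FAQ heading" },
];

console.log(
  `vague: ${vagueSteps.length} steps, explicit: ${explicitSteps.length} steps, ${explicitAssertions.length} assertions`,
);
```

Whether a particular rewording helps still has to be checked by re-running the test, since results can vary with the site and the model.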

Video Presentation

After completing and running the test cases, I decided to create a short demo video to push myself further, as I felt a video would be a better way to showcase what Passmark can do.

https://www.youtube.com/watch?v=vixWAl4vjq0

Important Links

Conclusion

At first, I was really surprised by the announcement of this hackathon, and I kept asking myself, "How is it possible to test an application using just plain English?" I was used to the idea that testing requires writing detailed test cases and automating browsers with tools like Selenium and other frameworks.

But when I went through the Passmark documentation and GitHub repository, I was genuinely amazed. I was able to write meaningful test cases simply by describing user actions, without complex setup.

Overall, this was a completely new experience for me. Testing an application using this approach changed how I think about automation.

My POV:

AI won’t replace traditional testing overnight, but tools like Passmark show that the future of testing might be less about writing code, and more about describing intent.